
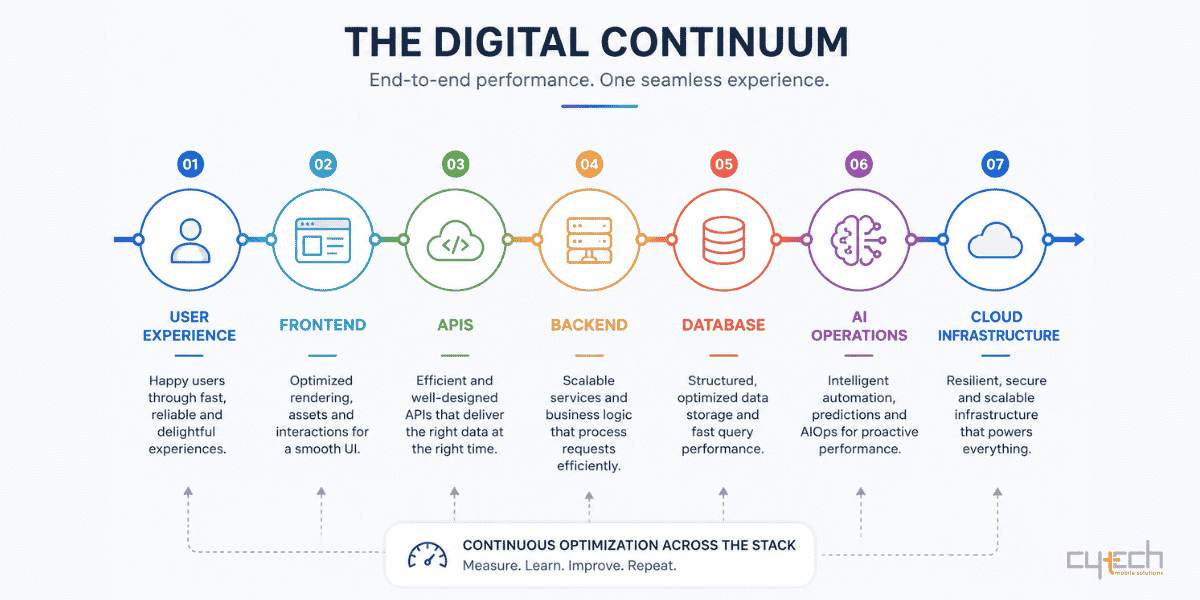

In 2026, software performance is no longer a competitive advantage reserved for leading companies; it is a baseline requirement for any digital business. Applications now shape customer experience, enable decision-making, support automation, and protect data. As systems evolve into complex ecosystems of microservices, APIs, serverless functions, and edge-native infrastructure, performance must be viewed as a complete digital continuum: from frontend responsiveness to backend throughput and infrastructure resilience.

Performance optimization is therefore both a technical and strategic priority. It directly affects operational stability, scalability, infrastructure cost, and innovation capacity. In distributed architectures, even small inefficiencies can become critical during traffic spikes. Poor performance also increases technical debt, slows development velocity, and raises maintenance costs. Modern organizations increasingly adopt a systems-thinking culture where frontend, backend, APIs, databases, caching, and AI workflows are optimized together.

Frontend Performance: Engineering for Human Perception

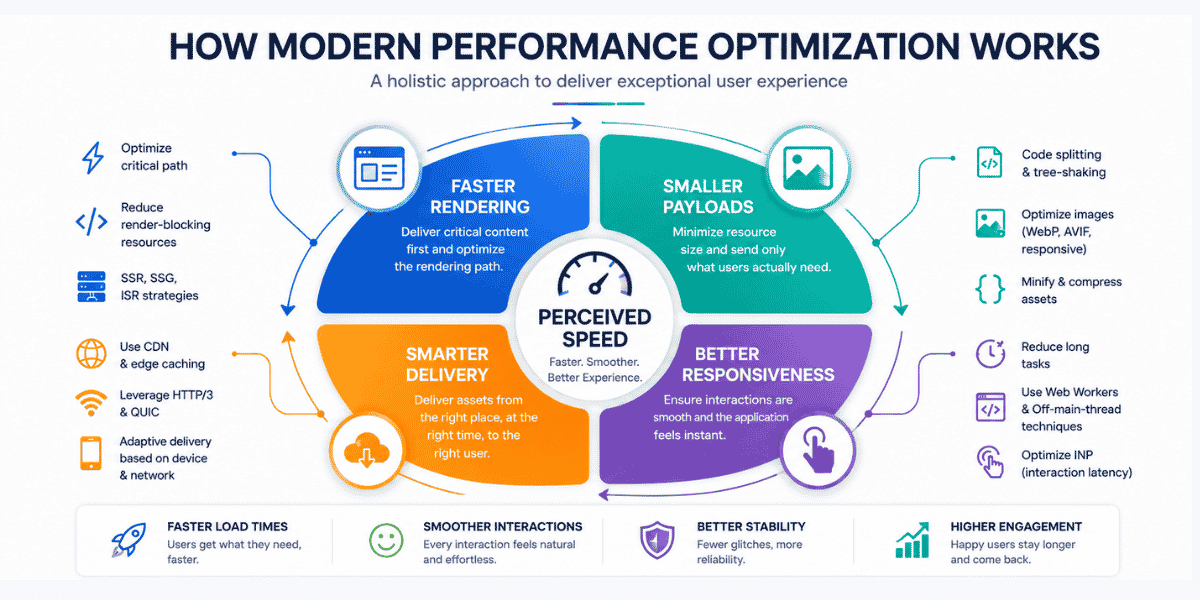

Frontend performance in 2026 is centered on experience engineering. Applications are expected not only to load quickly but also to feel smooth, responsive, and reliable throughout the entire user journey.

Google’s Core Web Vitals remain one of the main benchmarks for measuring user experience quality, with increasing emphasis on real-world user data instead of only synthetic testing.

| Metric | What It Measures | "Good" Threshold (75th percentile) | Optimization Focus |
|---|---|---|---|
| Largest Contentful Paint (LCP) | Main content loading speed | ≤ 2.5 s | CDN usage, image optimization, preloading |
| Interaction to Next Paint (INP) | Responsiveness to interactions | ≤ 200 ms | Breaking up long tasks, web workers |
| Cumulative Layout Shift (CLS) | Visual stability | ≤ 0.1 | Reserved media space, stable layouts |

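The replacement of First Input Delay (FID) with INP as a Core Web Vital in March 2024 reflects a major shift in performance philosophy. FID measured only the input delay of the first interaction; INP observes the latency of clicks, taps, and key presses throughout the entire page visit and reports a value close to the slowest of them, which makes it far more representative of modern single-page applications.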
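Core Web Vitals are assessed on field data at the 75th percentile of page visits, so a handful of slow sessions does not decide the rating on its own. The minimal Python sketch below rates a set of real-user samples that way. The "good" limits come from the table above; the "poor" limits (4 s, 500 ms, 0.25) are Google's published boundaries; the function names and the nearest-rank percentile method are illustrative choices, not the API of any monitoring tool.

```python
import math

# (good_limit, poor_limit): a p75 value <= good_limit is "good", a value
# above poor_limit is "poor", and anything in between "needs improvement".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}

def p75(samples):
    """75th percentile of field samples, using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]

def rate(metric, samples):
    value = p75(samples)
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"

# Eight hypothetical INP samples from real users: the single 600 ms interaction
# does not decide the rating by itself, but the p75 still lands above 200 ms.
print(rate("INP", [80, 120, 150, 160, 190, 210, 240, 600]))  # (210, 'needs improvement')
```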

Rendering strategies such as Server-Side Rendering, Static Site Generation, and Incremental Static Regeneration remain important because they deliver critical HTML earlier and improve perceived speed. Critical Rendering Path optimization also plays a key role. Above-the-fold content should load first, non-critical JavaScript should be deferred, and CSS should be optimized to prevent render-blocking behavior.

Code splitting and tree-shaking are equally essential. These techniques reduce JavaScript bundle size by ensuring users download only the code they actually need. Modern CSS features such as content-visibility: auto can also reduce rendering workload by delaying off-screen content processing.

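Media optimization remains one of the largest frontend priorities because images and videos often account for 50–60% of total page weight. Modern formats such as WebP and AVIF can reduce image size by 25–40% compared with older formats such as JPEG, while responsive image delivery through srcset lets each device download an appropriately sized file. Native lazy loading for below-the-fold images keeps bandwidth free for the LCP element (the LCP image itself should not be lazy-loaded), and explicit width and height attributes let the browser reserve space before images arrive, protecting CLS.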
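As a sketch of these media techniques working together, the Python helper below renders a `<picture>` element with an AVIF `srcset`, a JPEG fallback for browsers without AVIF support, explicit dimensions, and optional native lazy loading. The `?w=<width>&fm=<format>` URL scheme and the `img.example.com` host are hypothetical stand-ins for whatever image-resizing service or CDN a project actually uses.

```python
from html import escape

def _srcset(base_url, widths, fmt):
    # One candidate per width, e.g. "https://img.example.com/hero?w=480&fm=avif 480w".
    # The query-string format is a hypothetical image-resizing service.
    return ", ".join(f"{base_url}?w={w}&fm={fmt} {w}w" for w in sorted(widths))

def responsive_picture(base_url, alt, widths, width, height,
                       sizes="100vw", lazy=True):
    """Render <picture>: AVIF candidates first, JPEG <img> as the fallback."""
    img_attrs = {
        "src": f"{base_url}?w={max(widths)}&fm=jpg",
        "srcset": _srcset(base_url, widths, "jpg"),
        "sizes": sizes,
        # Explicit dimensions let the browser reserve space before the image
        # loads, which prevents layout shift (CLS).
        "width": str(width),
        "height": str(height),
        "alt": alt,
    }
    if lazy:
        # Native lazy loading; pass lazy=False for the above-the-fold LCP image.
        img_attrs["loading"] = "lazy"
    img = " ".join(f'{name}="{escape(value)}"' for name, value in img_attrs.items())
    avif_srcset = escape(_srcset(base_url, widths, "avif"))
    return (
        "<picture>"
        f'<source type="image/avif" srcset="{avif_srcset}" sizes="{escape(sizes)}">'
        f"<img {img}>"
        "</picture>"
    )

print(responsive_picture("https://img.example.com/hero", "Product photo",
                         [480, 960, 1440], 1440, 960,
                         sizes="(max-width: 600px) 100vw, 50vw"))
```

The browser picks the first `<source>` whose type it supports and then the smallest `srcset` candidate that satisfies `sizes`, so the helper only has to list options rather than detect devices.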

Backend Throughput and Scalability

Backend performance determines whether applications can handle high traffic volumes, process data efficiently, and maintain low latency under pressure. In 2026, backend systems are increasingly distributed, cloud-native, and modular.

Databases are often the main bottleneck because disk-based operations are significantly slower than memory-based processing. Effective optimization starts with indexing frequently queried columns and profiling slow queries with tools such as MySQL's EXPLAIN or PostgreSQL's EXPLAIN ANALYZE and the pg_stat_statements extension. Poor practices such as inefficient joins and unnecessary SELECT * queries, which read and transfer columns the application never uses, can dramatically reduce throughput.
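MySQL and PostgreSQL need a running server, so the sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's standard `sqlite3` module as a stand-in for the same workflow: inspect the plan, add an index on the filtered column, and confirm the plan changes from a full table scan to an index search. The `orders` table and the index name are hypothetical, and the exact plan wording differs between SQLite versions.

```python
import sqlite3

def query_plan(conn, sql, params=()):
    """Return SQLite's EXPLAIN QUERY PLAN detail strings for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]  # the last column holds the readable detail

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL, notes TEXT)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total, notes) VALUES (?, ?, ?)",
    [(i % 1000, i * 1.5, "x" * 50) for i in range(10_000)],
)

# Select only the columns the caller needs instead of SELECT *.
lookup = "SELECT id, total FROM orders WHERE customer_id = ?"

before = query_plan(conn, lookup, (42,))
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = query_plan(conn, lookup, (42,))

print("before:", before)  # a full table scan, e.g. ['SCAN orders']
print("after: ", after)   # an index search using idx_orders_customer
```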

Database sharding and partitioning are increasingly used to distribute workloads across multiple nodes, preventing individual servers from becoming constraints. Asynchronous processing is also essential. Heavy tasks should be moved out of the request path using message brokers and task queues such as RabbitMQ or Celery, allowing APIs to respond immediately while background workers complete the work independently.
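The pattern behind a task queue can be sketched in-process with Python's `asyncio.Queue`: the request handler enqueues a job and answers at once, while separate workers drain the queue. This is a simplified stand-in, not the RabbitMQ or Celery API; a real broker adds persistence, retries, and workers running in separate processes or machines. `send_welcome_email` and `handle_signup` are hypothetical names.

```python
import asyncio

async def send_welcome_email(user_id):
    """Hypothetical slow job (email, report generation, image processing)."""
    await asyncio.sleep(0.05)
    return f"email sent to user {user_id}"

async def worker(queue, results):
    # Background consumer: pulls jobs off the queue independently of requests.
    while True:
        user_id = await queue.get()
        try:
            results.append(await send_welcome_email(user_id))
        finally:
            queue.task_done()

async def handle_signup(queue, user_id):
    # The request handler only enqueues the job and returns immediately.
    await queue.put(user_id)
    return {"status": "accepted", "user_id": user_id}

async def main():
    queue, results = asyncio.Queue(), []
    workers = [asyncio.create_task(worker(queue, results)) for _ in range(2)]
    responses = [await handle_signup(queue, uid) for uid in (1, 2, 3)]
    print(responses)     # all three responses exist before any email is sent
    await queue.join()   # demo only: wait until the background jobs finish
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return responses, results

responses, results = asyncio.run(main())
print(sorted(results))
```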

Technology selection also influences performance. Go is often preferred for high-throughput APIs because of its lightweight concurrency model. Python with FastAPI remains important for AI and data-intensive systems. Node.js continues to dominate I/O-heavy real-time applications, while Java with Spring remains a strong choice for enterprise systems requiring long-term stability.

This shift toward modular architecture also reduces technical debt. Organizations increasingly move away from rigid monolithic systems toward scalable infrastructures that support long-term innovation. Cytech’s transition from legacy SMS gateways to open messaging infrastructure such as Sendium reflects this broader industry trend toward flexibility and sustainable scalability.

Caching, APIs, and Data Transfer

Caching is now considered a strategic requirement rather than a simple backend optimization. Serving data from memory can be 10–100 times faster than retrieving it from disk-based databases or external APIs.

| Layer | Technique | Main Benefit |
|---|---|---|
| Caching | Cache-aside, TTL, refresh-ahead | Faster access and reduced DB load |
| APIs | BFF or GraphQL | Reduced over-fetching and optimized payloads |
| Transport | HTTP/3 with QUIC | Better performance on unstable networks |
| Serialization | Protobuf, MessagePack | Smaller payloads and faster parsing |
| AI Systems | Semantic caching | Up to 15x faster responses |

Different caching strategies support different use cases. Cache-aside works well for read-heavy systems, while write-through improves consistency for critical data by writing to the cache and the database in the same operation. Refresh-ahead reloads frequently accessed entries shortly before they expire, keeping latency low for predictable workloads. Eviction policies such as Least Recently Used (LRU), combined with time-to-live (TTL) expiration, keep cache storage bounded and data reasonably fresh.
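A minimal in-process sketch of cache-aside with TTL expiration and LRU eviction, built on `collections.OrderedDict`. In production the cache would usually be a shared store such as Redis; here the `loader` callback stands in for the database query, and the injectable `clock` exists only so the example can simulate time passing.

```python
import time
from collections import OrderedDict

class CacheAside:
    """In-process cache-aside with per-entry TTL and LRU eviction (a sketch)."""

    def __init__(self, loader, max_entries=1000, ttl_seconds=60.0, clock=time.monotonic):
        self.loader = loader            # reads from the slow source, e.g. the database
        self.max_entries = max_entries
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = OrderedDict()   # key -> (expires_at, value), least recent first
        self.hits = self.misses = 0

    def get(self, key):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            self._entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return entry[1]
        # Miss or expired entry: read from the source, then populate the cache.
        self.misses += 1
        value = self.loader(key)
        self._entries[key] = (now + self.ttl, value)
        self._entries.move_to_end(key)
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return value

    def invalidate(self, key):
        # Call after writing to the source so readers do not see stale data.
        self._entries.pop(key, None)

db_reads = []

def load_product(product_id):  # hypothetical slow database query
    db_reads.append(product_id)
    return {"id": product_id, "name": f"product-{product_id}"}

fake_now = [0.0]
cache = CacheAside(load_product, max_entries=2, ttl_seconds=30, clock=lambda: fake_now[0])
cache.get(1); cache.get(1)  # miss, then hit
cache.get(2); cache.get(3)  # loading 3 evicts 1, the least recently used
cache.get(1)                # miss again because 1 was evicted
fake_now[0] = 31.0
cache.get(3)                # miss because the entry for 3 has expired
print(db_reads, cache.hits, cache.misses)  # [1, 2, 3, 1, 3] 1 5
```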

Semantic caching has become especially important for AI systems. Instead of relying on exact matches, semantic caching uses vector embeddings to identify requests with similar intent. This can improve response times by up to 15 times and reduce LLM costs by as much as 90%.
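The core lookup of a semantic cache is a nearest-neighbour search over embeddings with a similarity threshold. The sketch below assumes a caller-supplied `embed` function; the bag-of-words `toy_embed` is a hypothetical placeholder for a real embedding model, and the linear scan would be replaced by a vector index at scale. The threshold is an assumption that needs tuning: set it too low and users receive answers to questions they did not ask. The sketch illustrates the mechanism only and does not reproduce the speed or cost figures above.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class SemanticCache:
    """Reuse a stored LLM answer when a new prompt is similar enough to an old one."""

    def __init__(self, embed, threshold=0.9):
        self.embed = embed          # any text -> vector function (assumed, not a specific API)
        self.threshold = threshold  # a stricter threshold gives fewer, safer cache hits
        self._entries = []          # list of (embedding, answer)

    def lookup(self, prompt):
        query = self.embed(prompt)
        best_score, best_answer = 0.0, None
        # Linear scan for clarity; large caches would use a vector index instead.
        for vector, answer in self._entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def store(self, prompt, answer):
        self._entries.append((self.embed(prompt), answer))

# Hypothetical toy embedding: word counts over a tiny fixed vocabulary.
VOCAB = ["reset", "password", "change", "forgot", "invoice", "download", "my", "how"]

def toy_embed(text):
    words = text.lower().replace("?", "").split()
    return [words.count(term) for term in VOCAB]

cache = SemanticCache(toy_embed, threshold=0.8)
cache.store("How do I reset my password?", "Use the 'Forgot password' link on the sign-in page.")
print(cache.lookup("how to reset my password"))       # similar prompt: cached answer
print(cache.lookup("How do I download my invoice?"))  # different intent: None, so call the LLM
```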

API architecture is another major performance factor. Backend for Frontend architectures create dedicated backend layers for specific client types such as mobile or web applications. This allows optimized payloads and authentication flows, although it may increase operational complexity.
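To illustrate how a BFF tailors payloads, the sketch below shapes one hypothetical product record into separate mobile and web responses. In a real deployment each function would live in its own service in front of the shared backends; the record and its field names are invented for the example.

```python
import json

# Hypothetical full record returned by an internal product service.
PRODUCT = {
    "id": 101,
    "name": "Trail Shoe",
    "price_cents": 12900,
    "description": "Lightweight trail running shoe with a rock plate and a grippy outsole.",
    "images": [
        {"url": "https://img.example.com/101-1.jpg", "width": 1440},
        {"url": "https://img.example.com/101-2.jpg", "width": 1440},
    ],
    "inventory": {"warehouse_a": 12, "warehouse_b": 0},
    "reviews": [{"stars": 5, "text": "Great grip"}, {"stars": 4, "text": "Runs small"}],
}

def mobile_bff(product):
    # Mobile screens need a compact card: one thumbnail and a stock flag.
    return {
        "id": product["id"],
        "name": product["name"],
        "price_cents": product["price_cents"],
        "thumbnail": product["images"][0]["url"],
        "in_stock": sum(product["inventory"].values()) > 0,
    }

def web_bff(product):
    # The web product page shows more detail, but still omits raw inventory and review text.
    reviews = product["reviews"]
    return {
        **mobile_bff(product),
        "description": product["description"],
        "images": [image["url"] for image in product["images"]],
        "review_count": len(reviews),
        "average_stars": sum(r["stars"] for r in reviews) / len(reviews) if reviews else None,
    }

for client, shape in (("mobile", mobile_bff), ("web", web_bff)):
    payload = json.dumps(shape(PRODUCT)).encode()
    print(client, len(payload), "bytes")
```

The cost noted above shows up here as two code paths to test and deploy whenever the underlying record changes.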

GraphQL provides another approach by allowing clients to request only the data they need. Compared with REST-based over-fetching, GraphQL can reduce payload size by 30–50% while also minimizing unnecessary network requests.
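The payload reduction comes from the client's selection set. The Python sketch below mimics that projection on a plain dictionary so the size difference can be measured. It is not a GraphQL implementation (a real server parses the query and runs resolvers), and the record with its inflated text fields is hypothetical, so the resulting ratio is not a benchmark.

```python
import json

def select(data, selection):
    """Keep only the fields named in `selection`, mirroring a GraphQL selection set.

    `selection` maps a field name to True (return the value as-is) or to a
    nested selection for an object field; lists are projected element-wise.
    """
    if isinstance(data, list):
        return [select(item, selection) for item in data]
    result = {}
    for field, sub in selection.items():
        value = data[field]
        result[field] = value if sub is True else select(value, sub)
    return result

# Hypothetical REST response: the endpoint always returns every field.
rest_response = {
    "id": 7,
    "title": "Scaling APIs",
    "body": "Long article text. " * 40,
    "tags": ["api", "performance"],
    "created_at": "2026-01-15T09:00:00Z",
    "author": {"id": 3, "name": "Ana", "bio": "Engineer and writer. " * 10,
               "email": "ana@example.com"},
}

# Roughly what the GraphQL query `{ post(id: 7) { title author { name } } }` asks for.
query = {"title": True, "author": {"name": True}}
projected = select(rest_response, query)

full_bytes = len(json.dumps(rest_response).encode())
projected_bytes = len(json.dumps(projected).encode())
print(projected)  # {'title': 'Scaling APIs', 'author': {'name': 'Ana'}}
print(f"{full_bytes} bytes -> {projected_bytes} bytes")
```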

Transport protocols have also evolved. HTTP/2 already multiplexes requests, but over a single TCP connection, so one lost packet stalls every stream until it is retransmitted. HTTP/3 runs on QUIC, which uses UDP and tracks ordering per stream, so a lost packet delays only the stream it belongs to. In unstable mobile environments, reported performance improvements can exceed 60% compared with HTTP/2. However, organizations still need reliable HTTP/2 fallback support because some corporate networks restrict UDP traffic.
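A toy model makes the head-of-line difference visible. The Python sketch below interleaves packets from three streams, drops one, and computes when each stream finishes under a single ordered byte stream (TCP, as used by HTTP/2) versus per-stream ordering (QUIC, as used by HTTP/3). Time units, arrival times, and the retransmission delay are simplifying assumptions; the model ignores congestion control, loss detection, and everything else a real transport stack does.

```python
def completion_times(send_order, lost_index, retransmit_delay, per_stream_ordering):
    """Toy model of head-of-line blocking.

    Packet i normally arrives at time i + 1; the lost packet arrives only after
    `retransmit_delay` extra time units. With one ordered byte stream (TCP),
    nothing after the gap can be delivered until it is filled. With per-stream
    ordering (QUIC), only the stream that lost the packet has to wait.
    """
    arrival = [float(i + 1) for i in range(len(send_order))]
    arrival[lost_index] += retransmit_delay
    done_global = 0.0
    done_per_stream = {}
    for i, stream in enumerate(send_order):
        if per_stream_ordering:
            ready = max(arrival[i], done_per_stream.get(stream, 0.0))
        else:
            ready = max(arrival[i], done_global)
        done_global = max(done_global, ready)
        done_per_stream[stream] = ready
    return done_per_stream  # delivery time of each stream's last packet

order = ["A", "B", "C"] * 3  # three multiplexed requests with interleaved packets
tcp = completion_times(order, lost_index=1, retransmit_delay=10, per_stream_ordering=False)
quic = completion_times(order, lost_index=1, retransmit_delay=10, per_stream_ordering=True)
print("TCP :", tcp)   # {'A': 12.0, 'B': 12.0, 'C': 12.0}
print("QUIC:", quic)  # {'A': 7.0, 'B': 12.0, 'C': 9.0}
```

Only stream B, which lost a packet, still finishes at 12 under QUIC; under TCP every stream waits for the retransmission.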

Serialization format selection further affects performance. JSON remains dominant for public APIs because it is human-readable, but high-throughput systems increasingly use binary formats such as MessagePack and Protobuf. In published benchmarks, MessagePack parses roughly 3.5 times faster than JSON, while Protobuf achieves 6.5–7.5 times faster parsing and payloads up to 75% smaller; actual gains depend on the shape of the data and the libraries used.
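Protobuf and MessagePack require third-party packages, so this sketch uses Python's standard `struct` module to show the main reason schema-based binary encodings are smaller: field names and numbers-as-text never go on the wire. It is not the Protobuf or MessagePack wire format, and the order-line layout is a hypothetical example.

```python
import json
import struct

# Fixed binary layout: little-endian uint32 id, float64 price, uint16 quantity.
# As with Protobuf, the schema lives in code, so field names are not transmitted.
ORDER_LINE = struct.Struct("<IdH")

def encode_binary(record):
    return ORDER_LINE.pack(record["id"], record["price"], record["qty"])

def decode_binary(payload):
    id_, price, qty = ORDER_LINE.unpack(payload)
    return {"id": id_, "price": price, "qty": qty}

record = {"id": 12345, "price": 19.99, "qty": 3}
as_json = json.dumps(record, separators=(",", ":")).encode()  # compact JSON
as_binary = encode_binary(record)

print(len(as_json), len(as_binary))        # 34 14
print(decode_binary(as_binary) == record)  # True
```

The trade-off is the one the paragraph describes: the binary payload is unreadable without the schema, which is why JSON remains the default for public APIs.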

AI, Governance, and Engineering Culture

Artificial Intelligence is now integrated across the entire software development lifecycle. AI tools assist with architecture, coding, testing, deployment, monitoring, and maintenance. They can identify bugs, generate test cases, detect bottlenecks, and support proactive incident management through AIOps platforms.

However, AI-generated code also introduces new risks. Generated outputs may contain insecure patterns, hidden bias, or inefficient architectural decisions. Developers increasingly act as system orchestrators who review, validate, and guide AI-generated solutions.

Performance governance is also becoming more formalized. Organizations increasingly use performance budgets to prevent feature creep from damaging user experience. Common 2026 targets include LCP below 2.5 seconds, INP below 200 milliseconds, API response times below 200 milliseconds, and JavaScript bundles under 300KB compressed.
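Budgets work best when they are enforced automatically, for example as a CI step that fails when a measurement goes over its limit. The sketch below encodes the targets above; the metric keys, the choice of p95 for API latency, and where the measurements come from (lab runs, RUM exports, or bundle-size reports) are assumptions for illustration.

```python
# Hypothetical metric keys; units are part of each name.
BUDGETS = {
    "lcp_ms": 2500,                  # Largest Contentful Paint
    "inp_ms": 200,                   # Interaction to Next Paint
    "api_p95_ms": 200,               # API latency, assumed to be measured at p95
    "js_bundle_kb_compressed": 300,  # total JavaScript after compression
}

def check_budgets(measured, budgets=BUDGETS):
    """Return a list of violations; an empty list means every budget is met."""
    violations = []
    for metric, limit in budgets.items():
        value = measured.get(metric)
        if value is None:
            # Treat a missing measurement as a failure so gaps are not silently ignored.
            violations.append(f"{metric}: no measurement")
        elif value > limit:
            violations.append(f"{metric}: {value} exceeds budget of {limit}")
    return violations

measured = {"lcp_ms": 2310, "inp_ms": 240, "api_p95_ms": 180, "js_bundle_kb_compressed": 312}
problems = check_budgets(measured)
for problem in problems:
    print("BUDGET EXCEEDED:", problem)
# A CI wrapper would exit with a non-zero status when `problems` is non-empty.
```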

Technical managers must also balance performance with infrastructure cost. While cloud computing provides flexibility and scalability, uncontrolled usage can significantly increase operational expenditure. Many organizations therefore adopt hybrid approaches, keeping predictable workloads on-premise while using public cloud platforms for elastic demand.

Finally, performance depends heavily on culture. Continuous learning, systems thinking, collaboration, and shared ownership of quality are becoming essential engineering practices.

Future Outlook: 2026–2030

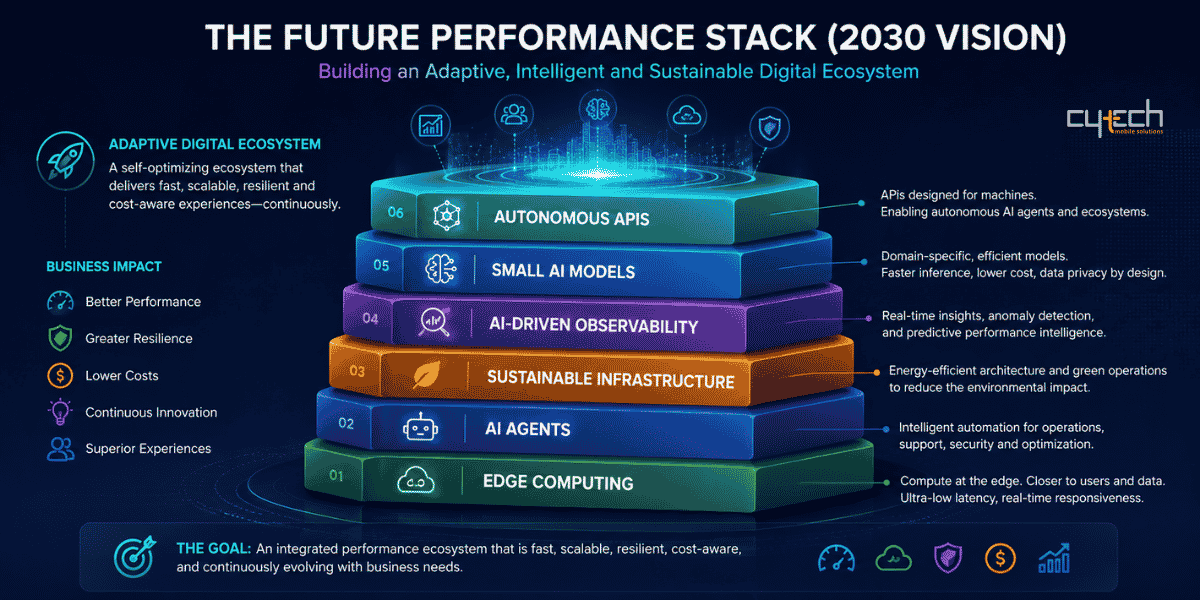

Looking toward 2030, performance optimization will increasingly focus on edge-native computing, sustainable software engineering, AI-driven observability, and specialized small AI models. Computing resources will move closer to users and data sources to reduce latency and improve real-time responsiveness. APIs will increasingly support autonomous AI agents rather than only human-facing applications.

At the same time, sustainable computing will become a major concern as organizations seek to reduce the energy impact of large-scale AI workloads. Smaller domain-specific models will also enable faster and more localized inference with lower infrastructure costs.

The organizations that succeed in this environment will be those that combine modular architecture, cloud-native infrastructure, disciplined performance governance, AI-driven operations, and a strong engineering culture. The future of software performance is no longer about optimizing isolated components. It is about building an integrated digital continuum that is fast, scalable, resilient, cost-aware, and capable of evolving continuously alongside business needs.
