
For over a decade, the cloud has been the undisputed center of gravity in digital infrastructure. Businesses moved workloads, data, and intelligence into massive hyperscale data centers, unlocking scalability and cost efficiency at unprecedented levels.

But today, that model is starting to bend.

A new paradigm is emerging—one that doesn’t replace the cloud, but redefines its role. Intelligence is moving closer to where data is created. This is the rise of edge-native software.

And it’s not just another architectural trend. It’s arguably the most important shift since the move from on-premises systems to the cloud.

From Centralization to Distribution (Again)

Technology has always moved in cycles.

We started with centralized mainframes, moved to distributed systems, then returned to centralized cloud platforms. Now, we’re entering a new phase—distributed intelligence at scale.

The reason is simple: the world is generating too much data, too fast.

IoT devices, cameras, sensors, and connected systems are producing massive streams of information. Sending all of it to the cloud is no longer viable—both technically and economically. Bandwidth costs, latency, and network congestion are becoming real bottlenecks.

Instead, organizations are shifting toward processing data at the source.

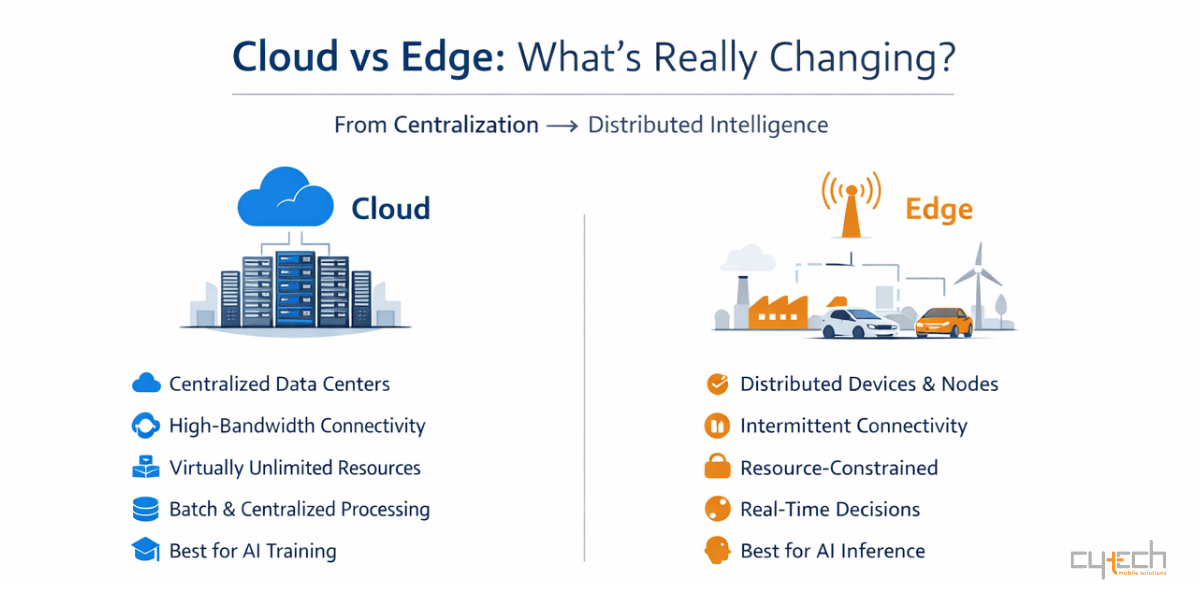

Cloud vs Edge: What Actually Changes?

The difference between cloud-native and edge-native architectures is not just location—it’s design philosophy.

| Feature | Cloud-Native | Edge-Native |
|---|---|---|
| Topology | Centralized data centers | Distributed across heterogeneous nodes |
| Connectivity | Stable, high-bandwidth | Intermittent, variable |
| Resources | Virtually unlimited | Constrained (CPU, memory, power) |
| Processing | Centralized analytics | Real-time local inference |
| Governance | Central control | Local autonomy |
| Primary Driver | Scalability & cost | Latency, privacy, real-time action |

The cloud still plays a critical role—especially for AI training and large-scale analytics. But execution is increasingly happening at the edge.

Think of it as a continuous loop:

Train in the cloud → Deploy at the edge → Learn from local data → Improve centrally.
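One common way to realize the "learn locally, improve centrally" half of this loop is federated averaging: each device trains on its own data and sends back only model parameters, which the cloud averages into a new global model. This is a toy sketch under stated assumptions, not a production training pipeline. The "model" is a single weight `w` for y ≈ w·x, and the device datasets, learning rate, and round count are all made up for illustration.

```python
# Toy sketch of train -> deploy -> learn locally -> improve centrally,
# using federated averaging (FedAvg) as ONE possible technique.
# Assumption: the model is a single weight w for y ~= w * x.

def local_update(w: float, data: list[tuple[float, float]],
                 lr: float = 0.01, epochs: int = 50) -> float:
    """Gradient descent on one device's private data; raw data never leaves."""
    for _ in range(epochs):
        # Gradient of the mean squared error (w*x - y)^2 with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(updates: list[tuple[float, int]]) -> float:
    """Cloud step: average device weights, weighted by each device's sample count."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total

# Hypothetical private datasets on three edge devices (true relation: y = 3x).
devices = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (3.0, 9.0)],
    [(2.5, 7.5)],
]

global_w = 0.0  # initial model produced in the cloud
for _ in range(5):
    # Deploy global_w to every device, learn locally, send back only the weight.
    updates = [(local_update(global_w, d), len(d)) for d in devices]
    global_w = federated_average(updates)

print(f"global weight after 5 rounds: {global_w:.3f}")
```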

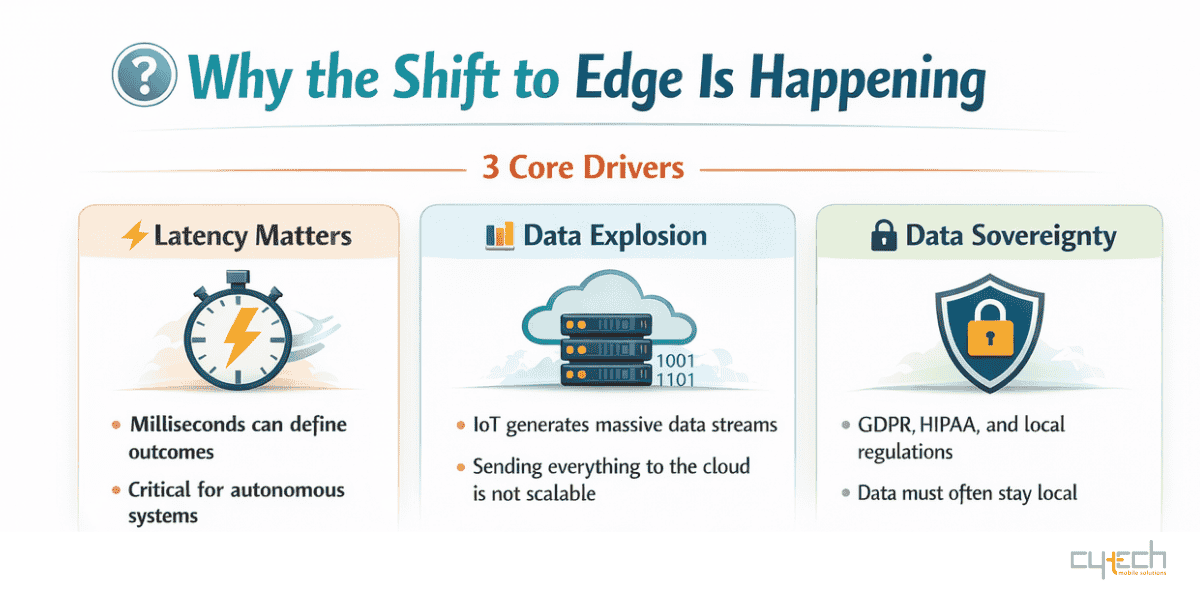

The Physics Problem: Latency & Bandwidth

At the heart of this shift is something fundamental: physics.

Data cannot travel faster than the speed of light. And in many applications, even milliseconds matter.

Take autonomous vehicles. At highway speeds, a car can travel several meters in the time it takes to complete a cloud round trip. That delay is unacceptable for safety-critical decisions like collision avoidance.
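The numbers behind this argument are easy to check. A rough sketch, assuming light in optical fiber travels at about 200,000 km/s and assuming a 100 ms end-to-end cloud round trip (real values depend on distance, routing, queuing, and server load):

```python
# Back-of-the-envelope latency check. Assumptions (illustrative, not measured):
# light in optical fiber covers ~200,000 km/s, and a real cloud round trip
# adds routing, queuing, and processing delay on top of pure propagation.
FIBER_SPEED_KM_PER_S = 200_000

def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S

def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Meters a vehicle travels while waiting delay_ms for a response."""
    return (speed_kmh / 3.6) * (delay_ms / 1000)

# A data center 1,500 km away can never answer in under 15 ms.
print(min_round_trip_ms(1500))  # 15.0
# At 120 km/h, an assumed 100 ms cloud round trip costs over 3 meters.
print(f"{distance_during_delay_m(120, 100):.2f} m")  # 3.33 m
```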

The same applies in industrial environments. High-resolution cameras inspecting production lines generate enormous data streams. Sending all that data to the cloud would overwhelm networks. Processing it locally allows instant defect detection—while only insights are sent upstream.
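A minimal sketch of the "process locally, send only insights upstream" pattern. The `inspect_frame` function is a hypothetical placeholder for an on-device vision model (here it simply flags frames whose mean brightness falls outside an assumed band), and the 16-byte insight size is an illustrative assumption:

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Insight:
    frame_id: int
    defect: bool
    score: float

def inspect_frame(frame_id: int, pixels: list[int],
                  low: float = 80, high: float = 180) -> Insight:
    """Hypothetical stand-in for an on-device model: flag frames whose
    mean 8-bit intensity falls outside an assumed acceptable band."""
    score = mean(pixels)
    return Insight(frame_id, defect=not (low <= score <= high), score=score)

def process_locally(frames: dict[int, list[int]]) -> tuple[list[Insight], int, int]:
    """Inspect every frame on the device; return only the defects, plus the
    raw and upstream payload sizes in bytes for comparison."""
    insights = [inspect_frame(fid, px) for fid, px in frames.items()]
    defects = [i for i in insights if i.defect]
    raw_bytes = sum(len(px) for px in frames.values())  # 1 byte per 8-bit pixel
    upstream_bytes = 16 * len(defects)                  # assumed ~16 bytes per insight
    return defects, raw_bytes, upstream_bytes

# Three tiny synthetic "frames"; only frame 2 is abnormally dark.
frames = {1: [120] * 1000, 2: [30] * 1000, 3: [150] * 1000}
defects, raw, up = process_locally(frames)
print([d.frame_id for d in defects], raw, up)  # [2] 3000 16
```

Even in this toy case, 3,000 bytes of raw pixels become a single 16-byte insight; real camera frames are far larger, while the insight stays the same size.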

Two forces are driving this transition:

- Time sensitivity → decisions must happen instantly

- Data volume → not all data can (or should) be centralized

And there’s a third factor gaining importance: data sovereignty. Regulations like GDPR and HIPAA increasingly require data to be processed locally, reinforcing the move toward edge architectures.

Rethinking the Software Stack

Building for decentralized computing is fundamentally different from building for the cloud.

Edge environments are messy. They include different hardware architectures (x86, ARM, RISC-V), limited power, and unreliable connectivity. Traditional cloud tools don’t always fit.

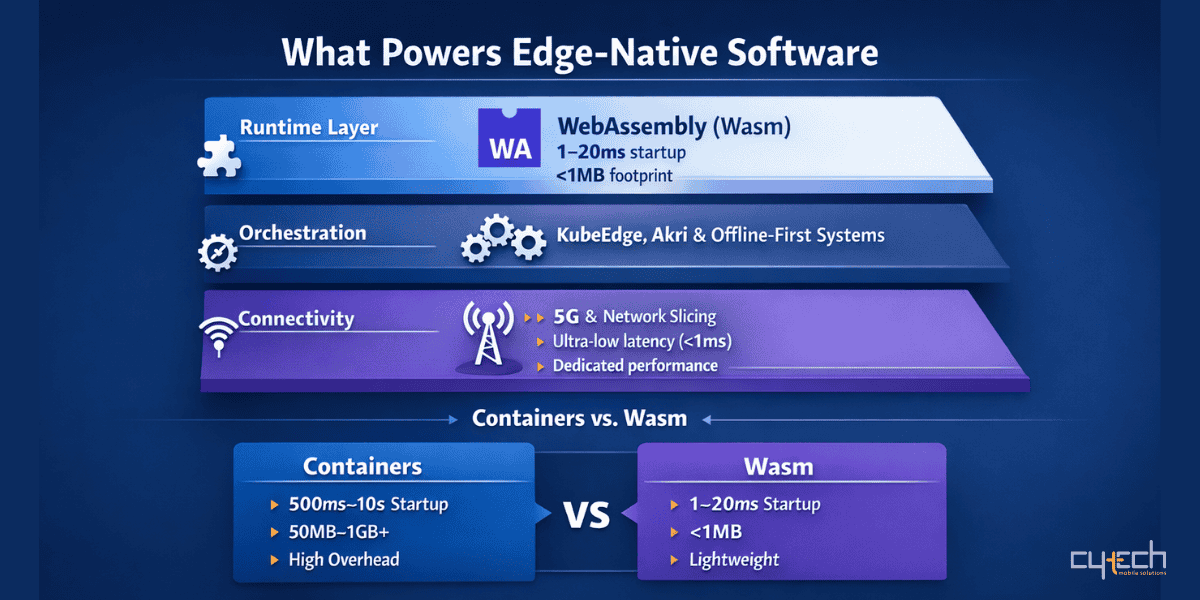

Why WebAssembly (Wasm) Matters

Containers transformed cloud computing—but at the edge, they’re often too heavy.

That’s where WebAssembly (Wasm) comes in (source).

| Runtime Characteristic | Containers (Docker) | WebAssembly (Wasm) |
|---|---|---|
| Startup Time | 500 ms – 10 s | 1 ms – 20 ms |
| Size | 50 MB – 1 GB+ | Typically < 1 MB |
| Isolation | OS-level (namespaces, cgroups) | Runtime sandbox |
| Portability | Tied to host OS kernel and CPU architecture | Any platform with a Wasm runtime |
| Resource Usage | High | Minimal |

Wasm enables near-instant execution with minimal overhead, making it ideal for edge environments.

This matters most for serverless workloads at the edge, where applications need to scale down to zero when idle to conserve energy, particularly on battery-powered devices.
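Scale-to-zero can be sketched as a handler that instantiates a module on the first request and releases it after an idle timeout. This is a hypothetical illustration: `load_module` stands in for instantiating a Wasm module in whatever runtime the device uses, and the 30-second timeout is arbitrary. Fast Wasm startup is what makes paying the cold-start cost on demand acceptable.

```python
import time
from typing import Callable, Optional

class ScaleToZeroHandler:
    """Starts an instance on demand and tears it down after an idle period."""

    def __init__(self, load_module: Callable[[], Callable], idle_timeout_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self._load_module = load_module  # hypothetical: instantiates the Wasm module
        self._idle_timeout_s = idle_timeout_s
        self._clock = clock
        self._instance: Optional[Callable] = None
        self._last_used = 0.0
        self.cold_starts = 0

    @property
    def running(self) -> bool:
        return self._instance is not None

    def handle(self, request):
        if self._instance is None:
            # Cold start: first request after scaling to zero.
            self._instance = self._load_module()
            self.cold_starts += 1
        self._last_used = self._clock()
        return self._instance(request)

    def reap_if_idle(self) -> bool:
        """Call periodically; releases the instance once it has been idle long enough."""
        if self.running and self._clock() - self._last_used >= self._idle_timeout_s:
            self._instance = None  # nothing left consuming memory or CPU
            return True
        return False

# Usage with a fake clock so the example is deterministic.
now = [0.0]
handler = ScaleToZeroHandler(lambda: str.upper, idle_timeout_s=30, clock=lambda: now[0])
first = handler.handle("ping")          # cold start #1
now[0] = 10.0
reaped_early = handler.reap_if_idle()   # idle 10 s: still warm
now[0] = 45.0
reaped_late = handler.reap_if_idle()    # idle 45 s: scaled to zero
second = handler.handle("pong")         # cold start #2
```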

Managing the Edge: Not Your Typical Kubernetes

Traditional orchestration tools like Kubernetes assume stable connectivity and abundant resources—two things the edge does not guarantee.

This has led to new approaches:

- KubeEdge extends Kubernetes to support offline-first environments

- Akri enables dynamic discovery of devices like cameras, sensors, and GPUs

- Edge systems can operate independently and synchronize later

This is a critical shift: systems must continue working even when disconnected.
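The offline-first behavior can be illustrated with a simple store-and-forward buffer: messages queue locally while the uplink is down and are delivered in order once it returns. This is a sketch of the general pattern, not how KubeEdge or any particular platform implements it; `send` is a hypothetical uplink function assumed to raise `ConnectionError` when offline.

```python
from collections import deque

class StoreAndForward:
    def __init__(self, send, max_buffer: int = 10_000):
        self._send = send  # hypothetical uplink; raises ConnectionError when offline
        # Bounded buffer: during a long outage the oldest messages are dropped first.
        self._buffer = deque(maxlen=max_buffer)

    def publish(self, message) -> None:
        self._buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Drain the buffer in order; stop at the first failure and keep the rest."""
        sent = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break  # still offline; local operation continues regardless
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._buffer)

# Simulated uplink that starts out disconnected.
online = {"up": False}
delivered = []

def send(msg):
    if not online["up"]:
        raise ConnectionError("uplink down")
    delivered.append(msg)

q = StoreAndForward(send)
q.publish({"temp": 21.5})
q.publish({"temp": 22.0})
queued_while_offline = q.pending()  # both readings buffered locally
online["up"] = True
flushed = q.flush()                 # delivered in order after reconnecting
```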

5G: The Missing Piece

Edge computing wouldn’t scale without the right connectivity layer. That’s where 5G comes in.

But 5G is more than faster internet—it’s a programmable network (source).

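| 5G Service Type | Key Capability | Edge Use Case |
|---|---|---|
| eMBB (enhanced Mobile Broadband) | High bandwidth | 8K video, AR/VR |
| mMTC (massive Machine-Type Communications) | Massive device density (up to 1M devices/km²) | Smart cities, IoT |
| URLLC (Ultra-Reliable Low-Latency Communications) | Ultra-low latency (< 1 ms) | Robotics, remote surgery |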

Combined with distributed computing, 5G enables a multi-layer intelligence fabric:

- Device layer → real-time decisions

- Gateway layer → local aggregation

- Network layer → high-performance processing

This architecture can achieve sub-5 millisecond response times, while keeping sensitive data local.
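One way to picture workload placement across these layers is a rule that picks the most central tier that still meets a task's latency budget, while keeping sensitive data on the device or gateway. The per-tier latencies below are illustrative assumptions chosen to be consistent with the sub-5 ms figure, not measurements, and the `place` function is hypothetical:

```python
# Hypothetical tiers ordered from the edge toward the cloud, with assumed
# typical round-trip latencies in milliseconds (illustrative, not measured).
TIERS = [("device", 1.0), ("gateway", 2.0), ("network", 4.0), ("cloud", 50.0)]
LOCAL_TIERS = {"device", "gateway"}  # sensitive data must not leave these

def place(latency_budget_ms: float, sensitive: bool = False) -> str:
    """Pick the most central tier that meets the budget and the data-locality rule."""
    candidates = [name for name, latency in TIERS if latency <= latency_budget_ms]
    if sensitive:
        candidates = [name for name in candidates if name in LOCAL_TIERS]
    if not candidates:
        raise ValueError(f"no tier can meet a {latency_budget_ms} ms budget")
    # More central tiers have more compute, so prefer the last qualifying one.
    return candidates[-1]

print(place(1.5))                  # device
print(place(5))                    # network
print(place(100))                  # cloud
print(place(100, sensitive=True))  # gateway
```

Real placement engines weigh many more signals (load, energy, cost), but the shape is the same: constraints first, then a preference order.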

AI Moves to the Edge

Artificial intelligence is the main driver behind the adoption of edge processing.

Training still happens in the cloud, but inference is moving to endpoint devices.

This shift has led to the rise of specialized hardware like Neural Processing Units (NPUs), designed for high performance with low power consumption.

Unlike general-purpose GPUs, NPUs are built specifically to accelerate neural-network inference, which suits workloads such as:

- Computer vision

- Natural language processing

- Multimodal AI (vision + audio + text)

This allows real-time decision-making directly on devices—from smart cameras to industrial robots.

The Hard Part: Distributed State

One of the biggest challenges in decentralized computing systems is managing data consistency across thousands of distributed nodes.

Event-Based Architectures

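Instead of storing only the current state, event-based systems store the sequence of events that produced it. The current state can be rebuilt at any time by replaying that log.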

This approach enables:

- Reliable synchronization

- Offline operation

- Full auditability
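A minimal event-sourcing sketch: an append-only log of inventory events (a hypothetical domain chosen for illustration) from which the current state is rebuilt by replay. The `since` method shows how a node that was offline can catch up by fetching only the events it missed.

```python
from dataclasses import dataclass, field
from typing import Optional

VALID_KINDS = ("received", "shipped")  # hypothetical event types

@dataclass(frozen=True)
class Event:
    seq: int
    kind: str
    sku: str
    qty: int

@dataclass
class EventLog:
    events: list[Event] = field(default_factory=list)

    def append(self, kind: str, sku: str, qty: int) -> Event:
        if kind not in VALID_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        event = Event(len(self.events) + 1, kind, sku, qty)
        self.events.append(event)  # append-only: existing events are never edited
        return event

    def since(self, seq: int) -> list[Event]:
        """Events after `seq`, i.e. what a reconnecting node still needs."""
        return [e for e in self.events if e.seq > seq]

def replay(events: list[Event], initial: Optional[dict[str, int]] = None) -> dict[str, int]:
    """Fold events into current stock levels, optionally on top of a snapshot."""
    stock = dict(initial or {})
    for e in events:
        delta = e.qty if e.kind == "received" else -e.qty
        stock[e.sku] = stock.get(e.sku, 0) + delta
    return stock

log = EventLog()
log.append("received", "bolt", 100)
snapshot = replay(log.events)  # an offline node last synced at seq 1
log.append("shipped", "bolt", 30)
log.append("received", "nut", 50)

# A full rebuild and an incremental catch-up produce the same state.
full = replay(log.events)
caught_up = replay(log.since(1), initial=snapshot)
print(full, full == caught_up)  # {'bolt': 70, 'nut': 50} True
```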

Conflict-Free Synchronization

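Technologies like CRDTs (Conflict-free Replicated Data Types) allow multiple devices to update the same data concurrently, even while offline. Replicas merge automatically and converge to the same state without a central coordinator.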
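To make "without conflicts" concrete, here is a sketch of a state-based grow-only counter (G-Counter), one of the simplest CRDTs. Each node increments only its own entry, and merging takes the per-node maximum, an operation that is commutative, associative, and idempotent, so replicas converge no matter how often or in what order they sync. Node names are hypothetical; production systems would typically use an established CRDT library rather than hand-rolled code.

```python
class GCounter:
    """State-based grow-only counter CRDT."""

    def __init__(self, node_id: str):
        self.node_id = node_id
        self.counts: dict[str, int] = {}

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a G-Counter can only grow")
        # Each node only ever writes to its own slot.
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + amount

    def merge(self, other: "GCounter") -> None:
        # Element-wise max: commutative, associative, idempotent.
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)

    def value(self) -> int:
        return sum(self.counts.values())

# Two cameras count events concurrently while disconnected.
a, b = GCounter("cam-1"), GCounter("cam-2")
a.increment(3)
b.increment(2)
b.increment(3)

# Sync in both directions, then repeat a merge: nothing is double-counted.
a.merge(b)
b.merge(a)
a.merge(b)
print(a.value(), b.value())  # 8 8
```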

| Strategy | Mechanism | Edge Benefit |
|---|---|---|
| Event Sourcing | Append-only logs | Offline resilience |
| CQRS | Separate read/write models | Performance optimization |
| CRDTs | Automatic conflict resolution | Multi-device sync |
| Local Cache | On-device storage | Instant response |

Middleware like Dapr simplifies this complexity by providing reusable building blocks for distributed applications.

Security in a World Without Perimeters

Edge environments are inherently more exposed than centralized data centers.

Devices may be deployed in factories, streets, or remote locations—making them vulnerable to physical access and tampering.

The solution? Zero Trust architecture.

Key principles include:

- Hardware-based identity (TPMs)

- Distributed secrets management

- Automated patching and updates

- Autonomous operation during attacks

The goal is simple: even if one node fails or is compromised, the system as a whole survives.
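Zero Trust means every message is authenticated on its own merits, not trusted because of where it came from on the network. A minimal sketch using per-device HMAC keys from Python's standard library, under loud assumptions: in practice the key would be sealed in a TPM or secure element (or replaced by an asymmetric key and mutual TLS), and the message format is hypothetical. Because each device has its own key, a key stolen from one node cannot be used to impersonate another.

```python
import hashlib
import hmac
import json

def sign(device_key: bytes, device_id: str, payload: dict) -> dict:
    """Device side. Assumption: device_key would really live in a TPM, not in memory."""
    body = json.dumps({"device": device_id, "payload": payload}, sort_keys=True).encode()
    tag = hmac.new(device_key, body, hashlib.sha256).hexdigest()
    return {"body": body.decode(), "tag": tag}

def verify(key_registry: dict[str, bytes], message: dict) -> dict:
    """Gateway side: verify every message, regardless of network origin."""
    body = message["body"].encode()
    device_id = json.loads(body)["device"]
    key = key_registry.get(device_id)
    if key is None:
        raise PermissionError(f"unknown device {device_id!r}")
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing.
    if not hmac.compare_digest(expected, message["tag"]):
        raise PermissionError("signature mismatch: tampered message or wrong key")
    return json.loads(body)["payload"]

# Hypothetical per-device keys (demo values only).
registry = {"sensor-7": b"k7-demo-key", "sensor-8": b"k8-demo-key"}
msg = sign(registry["sensor-7"], "sensor-7", {"temp": 21.5})
print(verify(registry, msg))  # {'temp': 21.5}

tampered = dict(msg, body=msg["body"].replace("21.5", "99.9"))
forged = sign(registry["sensor-7"], "sensor-8", {"temp": 0.0})  # stolen key, wrong identity
for bad in (tampered, forged):
    try:
        verify(registry, bad)
    except PermissionError as err:
        print("rejected:", err)
```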

Real Business Impact

Edge-native architectures are not theoretical—they are already transforming industries (source).

| Industry | Use Case | Impact |
|---|---|---|
| Manufacturing | Predictive maintenance | -67% downtime, -24% costs |
| Healthcare | Real-time monitoring | Faster response, better privacy |
| Smart Cities | Traffic & safety systems | Real-time optimization |
| Logistics | Fleet coordination | Instant decision-making |
| Agriculture | Autonomous monitoring | Works without connectivity |

The common thread? Real-time intelligence creates real value.

What Comes Next?

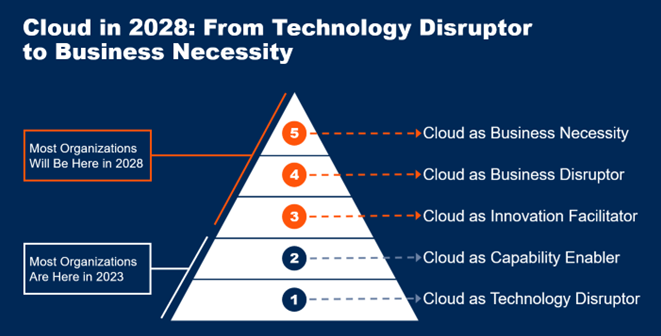

By 2028, more than 95% of new digital workloads are expected to be cloud-native—but increasingly distributed across edge environments (source).

The future is not cloud vs edge. It’s cloud + edge working together.

We’re moving toward:

- AI-driven workload placement

- Energy-efficient computing (Wasm, scale-to-zero)

- Edge-first application design

- Fully autonomous systems

Final Thought

The cloud is no longer the destination—it’s part of a broader ecosystem.

The real innovation is happening at the edge, where data is created, decisions are made, and value is realized instantly.

Edge-native software isn’t just an evolution.

It’s a fundamental shift toward a more responsive, intelligent, and decentralized digital world.